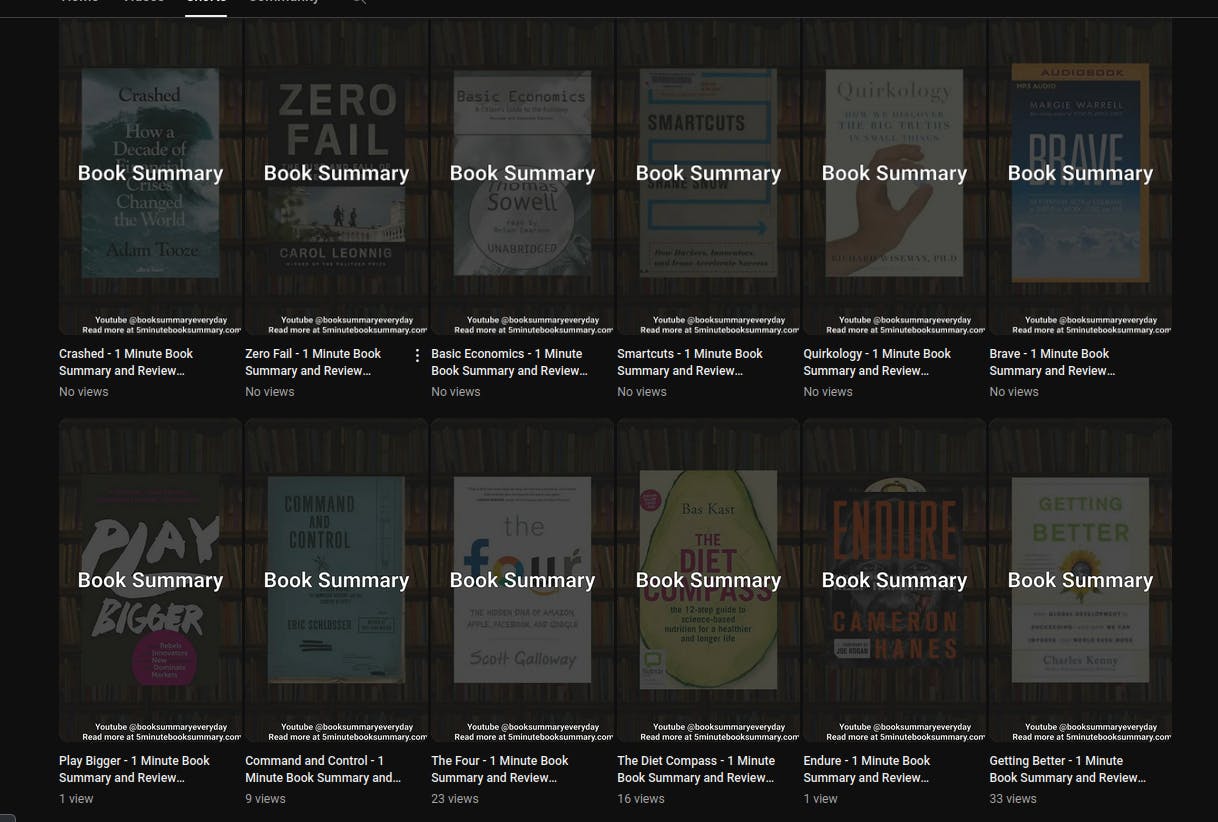

Using Free AI Tools to Create a 100% Automated YouTube Shorts Channel

by

May 9th, 2024

Check out my newsletter, where I round up the best articles for entrepreneurs on the web: https://thesolofoundernewsletter.com/
