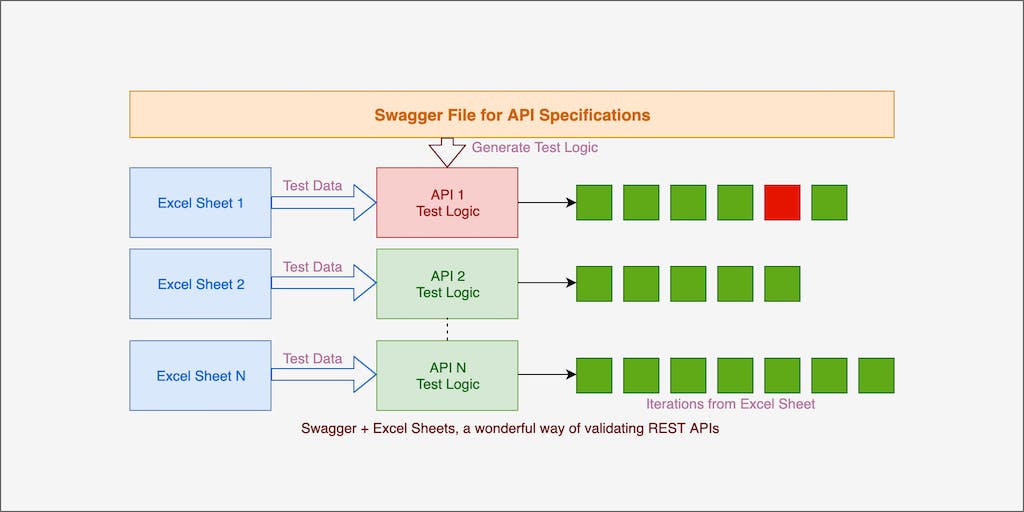

Using Swagger And Excel Sheets for Validating REST APIs

by

January 13th, 2020

* Creator of vREST NG * API Automation Expert * Passionate about Yoga and Kalaripayattu
