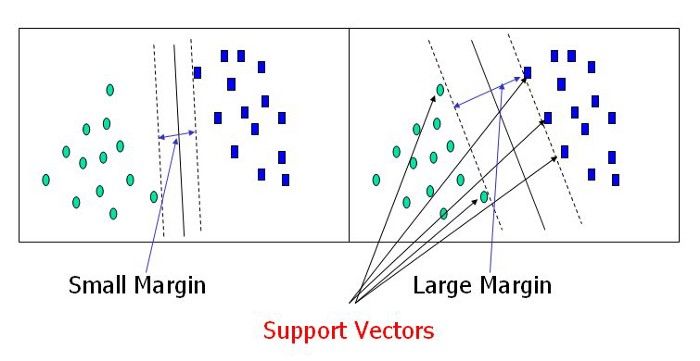

Building a Handwritten Digit Recognizer Using a Support Vector Machine

by

October 17th, 2020

About Author

Self-taught Machine Learning Engineer and Data Scientist. Love data-driven problems and AI, ML, and DS.