
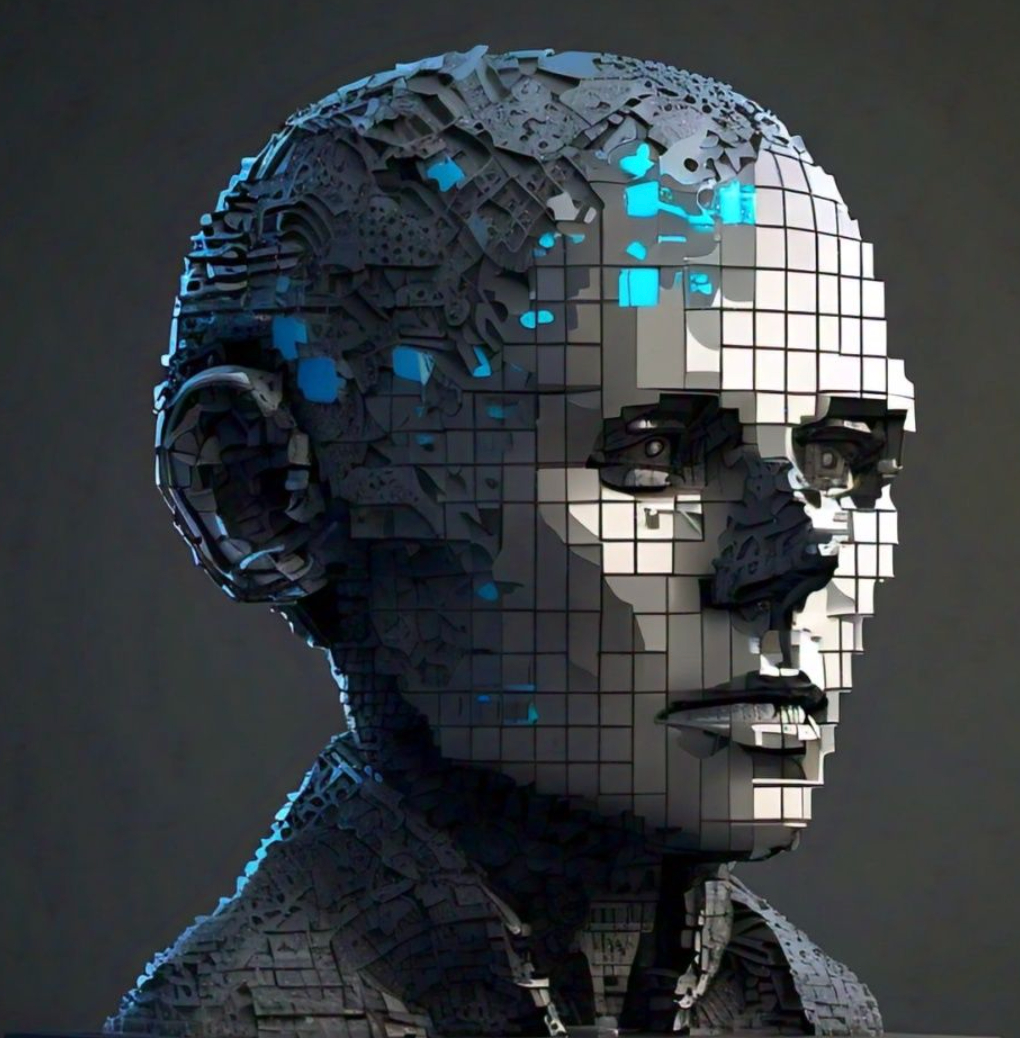

Addressing Kevin Mitchell’s Questions From the Perspective of Conscious Turing Machine Robots

by

September 4th, 2024


AIthics illuminates the path forward, fostering responsible AI innovation, transparency, and accountability.

