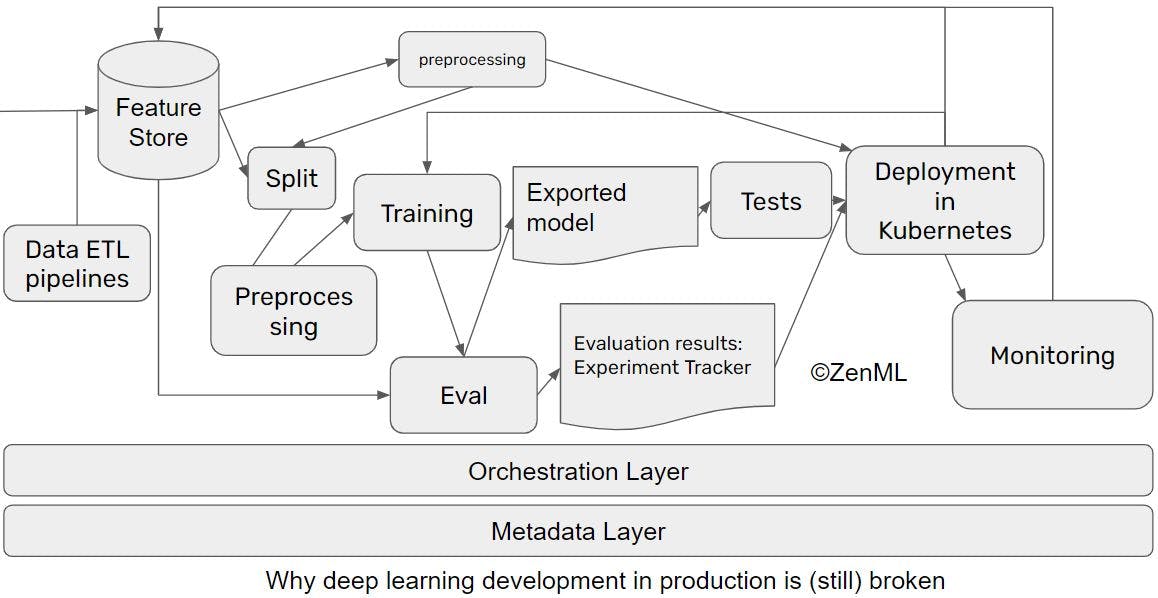

Why ML in Production Is (Still) Broken and Ways We Can Fix It

by

February 1st, 2021

Launched stuff on ProductHunt. Open-sourced some things. Developed some nice products.
