1,096 reads

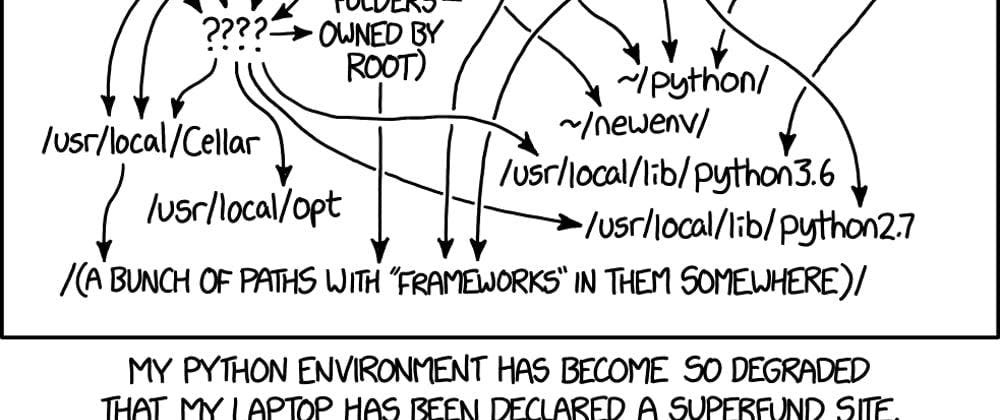

Setting up a Python development environment - The never ending story.

by

May 1st, 2024

Andreas Winschu is Data Engineer working on various Python data projects, having also to deal with MLOps work

Story's Credibility

About Author

Andreas Winschu is Data Engineer working on various Python data projects, having also to deal with MLOps work