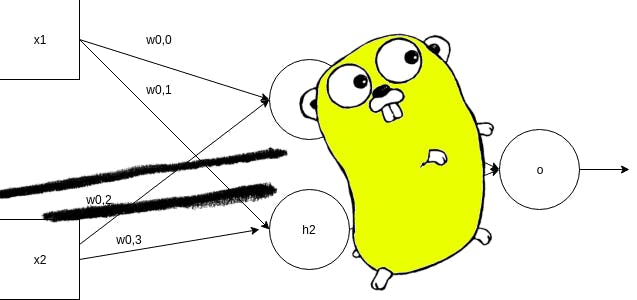

Training Neural Networks with Gorgonia

January 11th, 2020

Founder: "pythondrops.com". Full-stack dev/ AI Engineer/ Professional Writer/ M.Sc. Rio de Janeiro