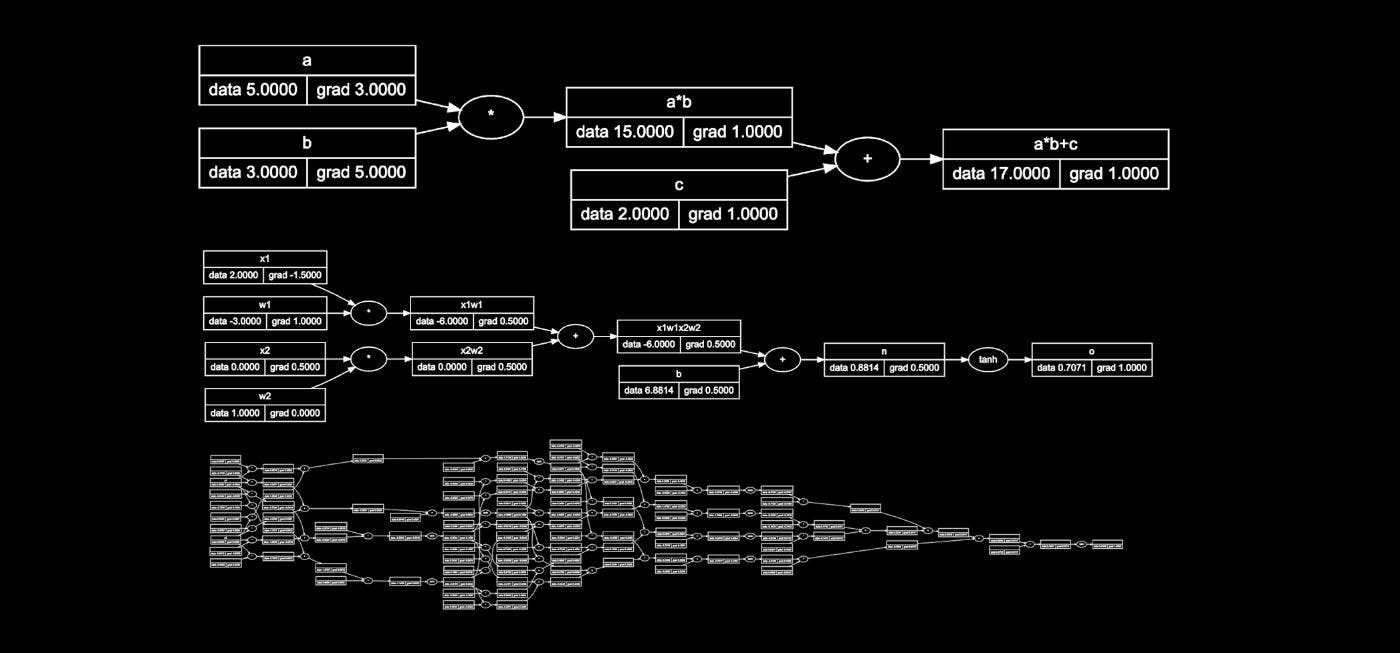

Getting Started with Micrograd TS

by

August 7th, 2023

Software Engineer @ UBER. Author of the 100k ⭐️ javascript-algorithms repository on GitHub.
