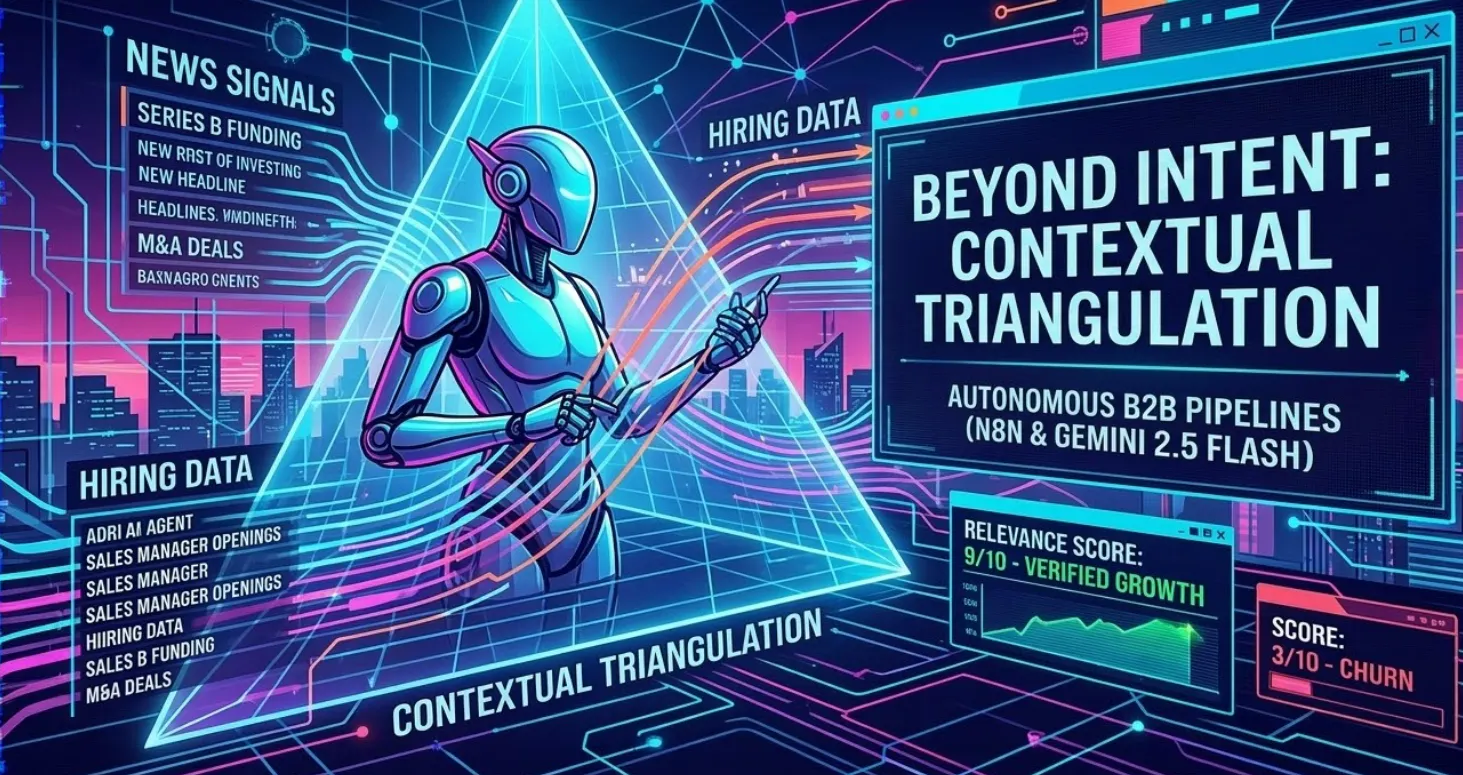

The $0.01 B2B Lead: Engineering an Autonomous SDR Agent

by

March 19th, 2026

AI Growth Lead | $500K round via AI pipelines | 90% data automation. Turning LLMs into ROI
