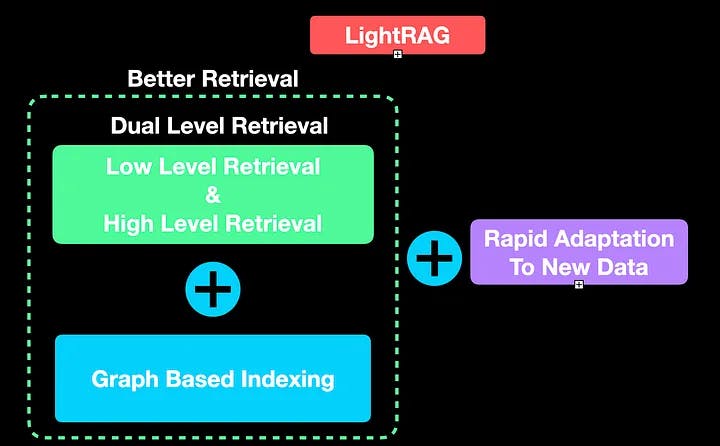

LightRAG - Is It a Simple and Efficient Rival to GraphRAG?

by

November 15th, 2024

I am an AI Research Engineer. I was formerly a researcher @Oxford VGG before founding the AI Bites YouTube channel.
