18,700 reads

How people talk about marijuana on Reddit: a natural language analysis

Too Long; Didn't Read

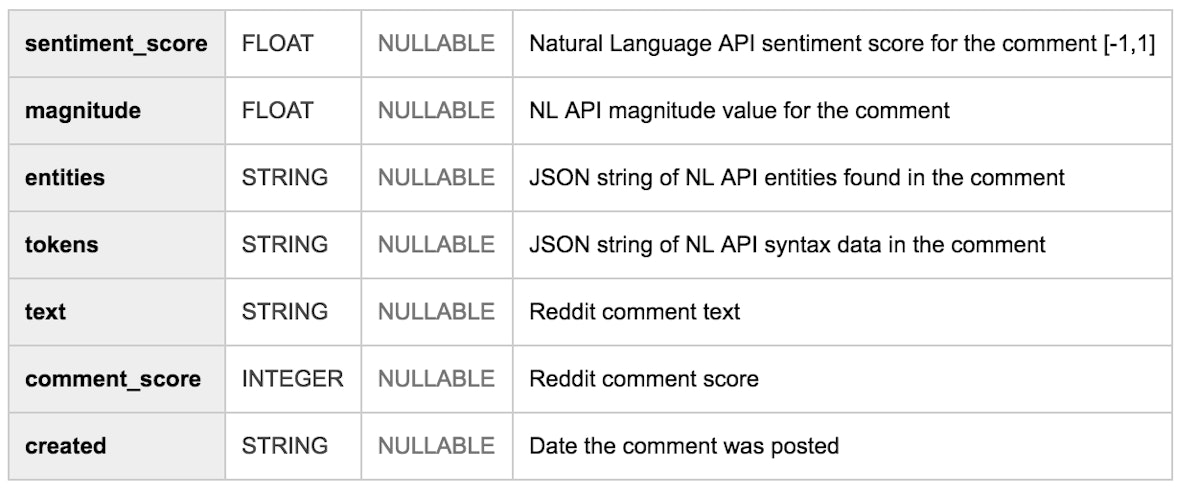

In honor of today being <a href="https://en.wikipedia.org/wiki/420_%28cannabis_culture%29" target="_blank">April 20th</a>, I thought it would be interesting to do some NLP on Reddit comments about marijuana (shout out to <a href="https://twitter.com/yufengg?lang=en" target="_blank">Yufeng Guo</a> for this idea!). My teammate <a href="https://medium.com/@hoffa" data-anchor-type="2" data-user-id="279fe54c149a" data-action-value="279fe54c149a" data-action="show-user-card" data-action-type="hover" target="_blank">Felipe Hoffa</a> has conveniently made <a href="https://bigquery.cloud.google.com/dataset/fh-bigquery:reddit_comments?pli=1" target="_blank">all Reddit comments available in BigQuery</a>, so I took a subset of those comments and ran them through Google’s <a href="https://cloud.google.com/natural-language/" target="_blank">Natural Language API</a>.L O A D I N G

. . . comments & more!

. . . comments & more!