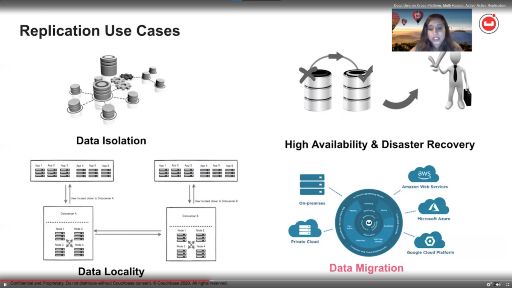

Dealing With Replication, High-Performance Queries, and Other Data Platform Challenges

by

November 15th, 2020

Data tech writer, geogeek, product management and marketing. #nosql #bigdata #analytics #geospatial