Poll of the Week

Should internet users be required to verify themselves using a government-issued ID?

US lawmakers have previously floated the idea of requiring internet users to verify their age with an ID and/or a parental consent form before using a service such as social media. Is that something you would be in favor of?

Trending Companies

1. Microsoft (microsoft.com): +675.00% since 1975
2. Tesla (tesla.com): +250.00% since 2003
3. Instagram (instagram.com): +51.57% since 2010
4. ThoughtWorks (thoughtworks.com): +79.35% since 1993
5. MageComp (magecomp.com): -56.59% since 2014

Programming

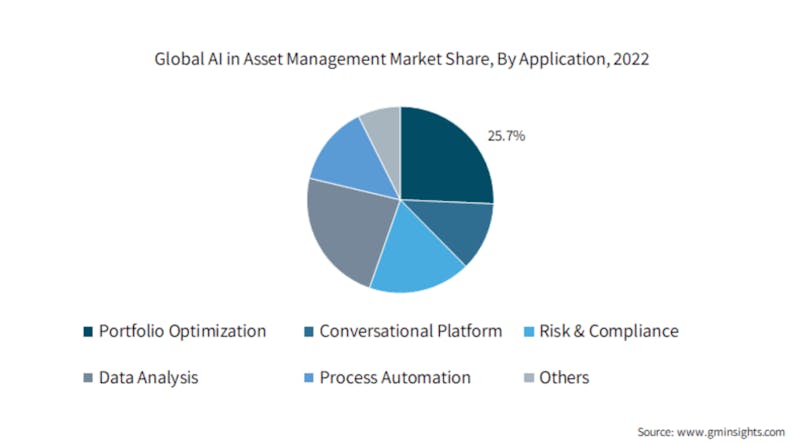

Data Science

Finance

Futurism

Gaming

Hackernoon

Life Hacking

Management

Business

Society

Media

Machine Learning

Cybersecurity

Web 3

Product Management

Science

Startups

Remote Work

Tech Companies

Tech Stories

Writing

Cloud

Other stories published today

Leveraging LLMs for Generation of Unusual Text Inputs in Mobile App Tests: Abstract and Introduction

Writings, Papers and Blogs on Text Models

Discard Manual Scheduling With DolphinScheduler 3.1.x Cluster Deployment

Zhou Jieguang

How Pyth Price Feeds Transform Morph's DeFi Landscape

Ishan Pandey

Gianluca Sacco Unveils VALR's Grand Slam Trading Incentives: A New Era in Crypto Futures

Ishan Pandey