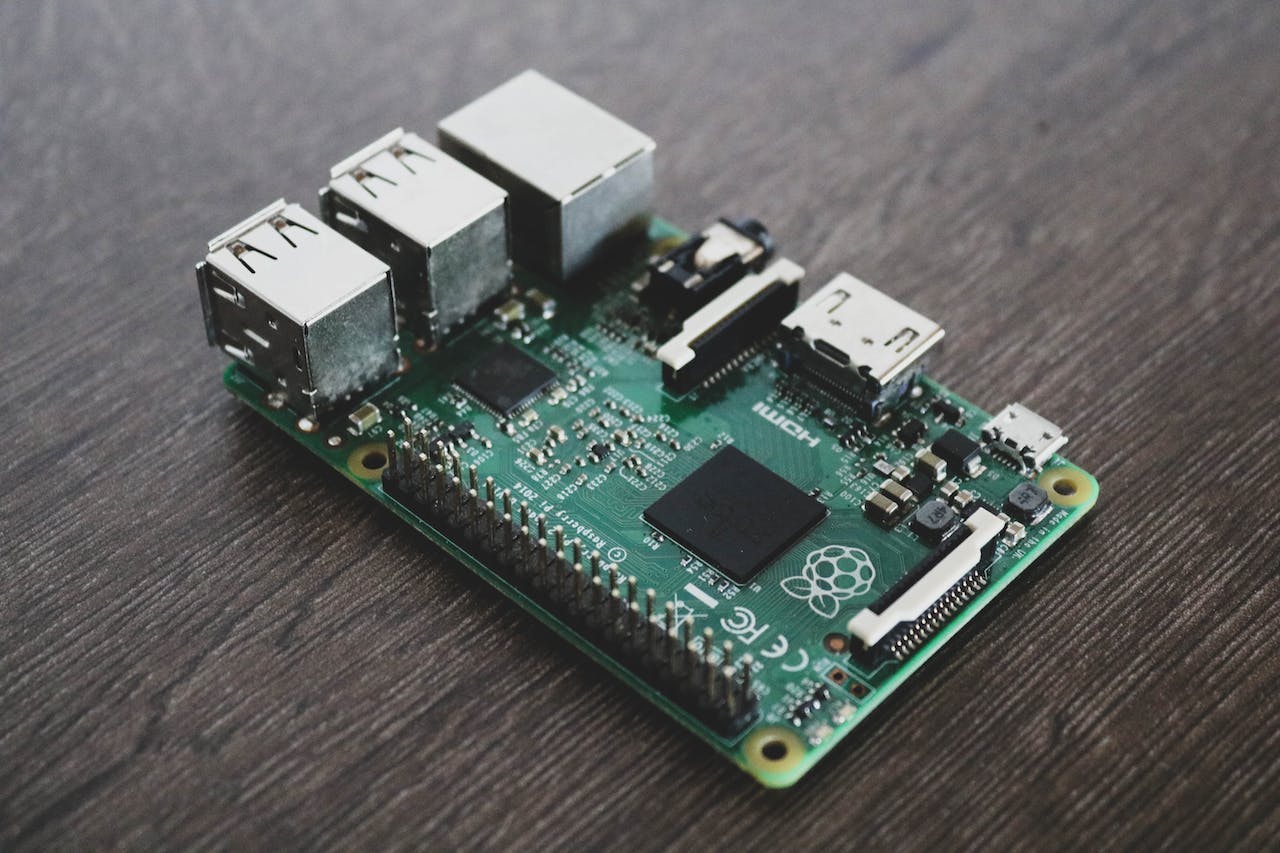

Using Raspberry Pi to Migrate GitHub Runners to Self-Hosted Ones

by

March 14th, 2024

Dev Advocate | Developer & architect | Love learning and passing on what I learned!
